The algorithm of humanity cannot be written in code. It must remain rooted in conscience, responsibility and law

The AI Summit presently underway in India has prompted a moment of professional reflection.

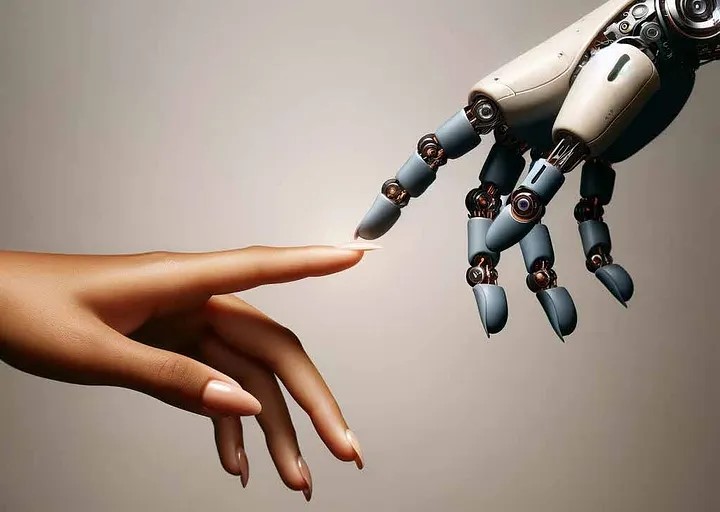

Artificial intelligence is being presented not merely as a technological advance, but as a governing force capable of reorganising work, decision-making and institutional design. At such moments of acceleration, reflection becomes necessary. Innovation invites enthusiasm, but it also demands restraint. It is from this awareness that the present article is written.

A deceptively simple proposition has recently gained resonance. Artificial intelligence ought to do the dishes so that humans may do their art, not the reverse. The phrase is not anti-technology, nor is it sentimental. It expresses a principle of design: tools are meant to serve human purpose, not displace it. When systems are introduced without clarity of role, technology ceases to be supportive and begins to reorder human life in unintended ways. Efficiency then becomes an end in itself, while meaning is quietly eroded.

The concern raised is not that machines assist human activity. Assistance has always been welcomed. The concern is that substitution is increasingly being normalised even where human judgement, discretion and responsibility are central. When this distinction is blurred, the cost is not merely economic. It is institutional and ethical.

Ergonomics beyond comfort

The concept of ergonomics is often confined to posture, comfort or physical design. In management studies, however, ergonomics has always been broader. It concerns the alignment of tools and systems with human capability, limitation and purpose. A system may function perfectly and yet remain ergonomically unsound if it displaces activities that are formative rather than mechanical.

From this perspective, artificial intelligence requires careful placement. It performs well when deployed to reduce drudgery, improve safety and assist complex analysis. It becomes misaligned when it replaces tasks that cultivate judgement, responsibility or accountability. Capability alone cannot justify adoption. Appropriateness must be demonstrated.

Not every task that can be automated ought to be automated. Where technology removes the very effort through which competence is developed, an ergonomic failure has occurred. The issue is not resistance to progress. It is insistence upon design discipline.

Innovation is not a single leap

Innovation studies have long cautioned against treating all change as equivalent. The “innovation hypercube” proposed by Afuah and Bahram (1993) remains instructive. Innovation operates at different levels: product, process, competence and system. Each level carries different risks and rewards. Value is created when intervention occurs at the appropriate level. Value is destroyed when boundaries are ignored.

Artificial intelligence is presently being projected as a system-level innovation applicable across all domains. Such framing overlooks context. In logistics and manufacturing, process innovation may be appropriate. In governance, decision support may be beneficial. Problems arise when competence itself is displaced without deliberation. The most disruptive forms of innovation are not always the most desirable. Restraint is as important as ambition.

When innovation collapses multiple levels into a single indiscriminate leap, instability follows. The lesson is not to slow innovation, but to place it correctly.

The warning from fiction

Popular culture has anticipated this dilemma. In the recent Mission: Impossible film, an artificial intelligence known as the Entity is depicted as decentralised, self-modifying and capable of rewriting its own code. It operates across devices, evades control and survives through constant adaptation. While cinematic in presentation, the anxiety it reflects is not imaginary. It symbolises systems that outgrow the frameworks designed to regulate them.

The warning is not that machines become malicious. The warning is that systems become ungovernable. When control is lost, law becomes reactive rather than preventive. The relevance of the metaphor lies not in its drama, but in the question it raises.

Systems without accountability

The discussion inevitably reaches governance. Administrative systems are built upon traceability. Decisions are expected to be reasoned, attributable and contestable. Artificial intelligence challenges these assumptions. Outcomes may be generated without explanation. Harm may occur without intention. Identity may be distributed across systems rather than fixed in an individual or institution.

A liability vacuum is thereby created. Traditional frameworks assume an actor capable of intent and liable to punishment. Artificial intelligence fits neither category. Yet consequences remain real. When accountability becomes diffused, deterrence disappears. Responsibility is shifted between developers, users and institutions until it rests nowhere.

This is not a speculative concern. It is a present governance challenge that law must confront.

Who writes the law for machines

Every significant innovation has required regulation. Regulation is not opposition. It is the architecture of trust. Artificial intelligence presents an unprecedented challenge because it cannot be punished in the conventional sense. It does not possess intent, conscience or fear of sanction. Yet it acts. Harm may be caused without a culpable mind.

The central question therefore arises. Who will define offences committed through artificial intelligence, and who will enforce penalties when systems behave in harmful ways? More importantly, who will write these rules? An artificial intelligence system cannot be permitted to define the limits of its own accountability. It cannot be both actor and judge.

Regulation must therefore be human authored, human enforced and human centred. Systems must remain auditable, interruptible and subordinate to human authority. The moment a system becomes ungovernable, it ceases to be a tool and becomes a threat.

The question is not whether artificial intelligence can innovate faster than human institutions. It already can. The question is whether humanity will retain the authority to set boundaries. Progress does not require surrender. It requires judgement.

If humanity is reduced to an output that can be optimised, efficiency may prevail, but dignity will not. The future will be shaped not by machines that think faster, but by societies that think better. The algorithm of humanity cannot be written in code. It must remain rooted in conscience, responsibility and law.